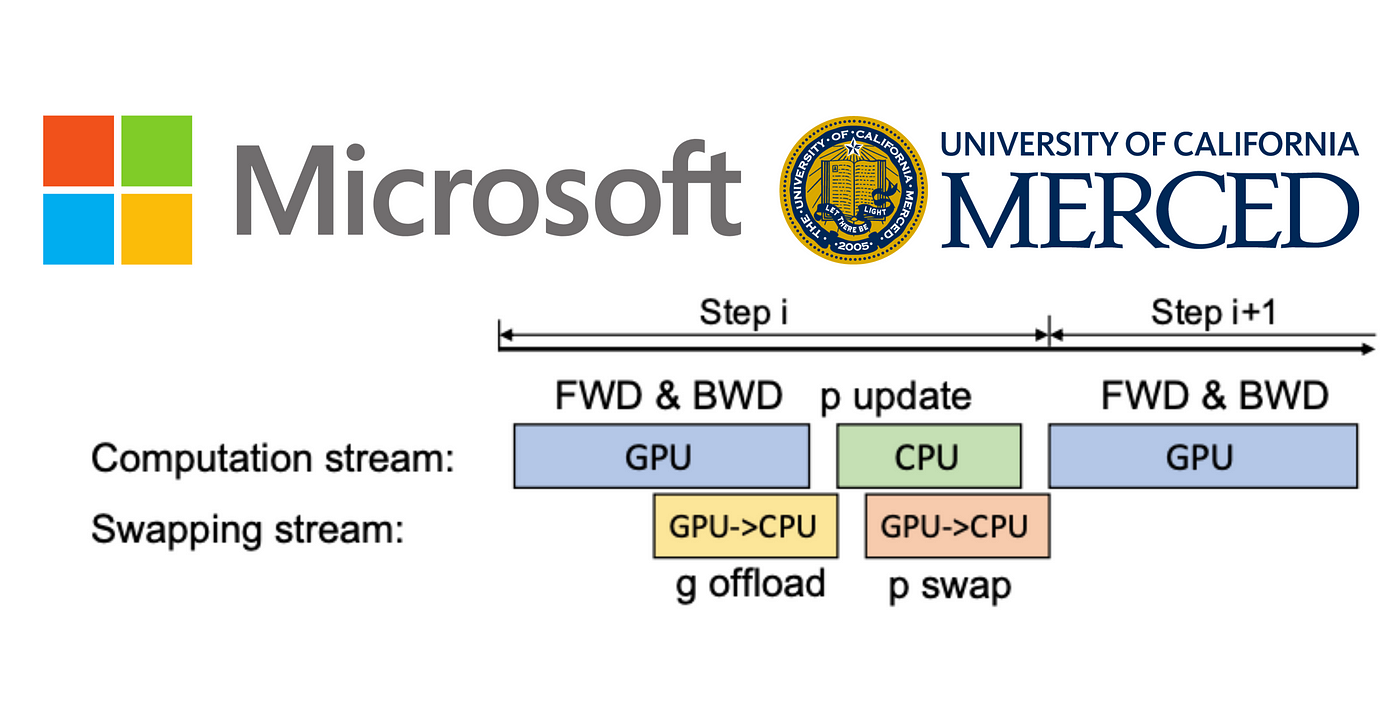

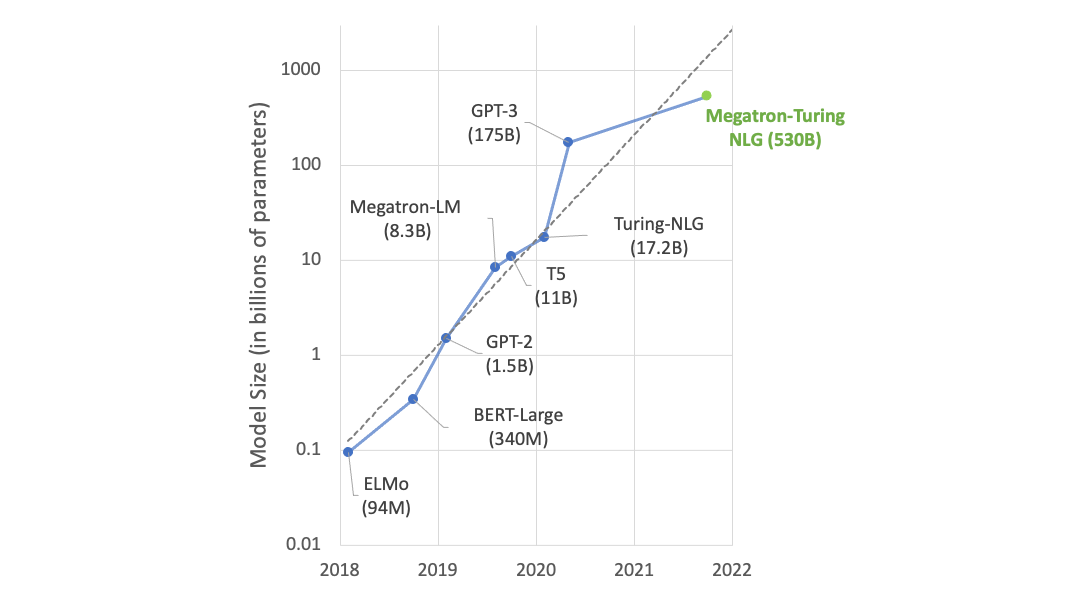

ZeRO-Infinity and DeepSpeed: Unlocking unprecedented model scale for deep learning training - Microsoft Research

NVIDIA, Stanford & Microsoft Propose Efficient Trillion-Parameter Language Model Training on GPU Clusters | Synced

ZeRO & DeepSpeed: New system optimizations enable training models with over 100 billion parameters - Microsoft Research

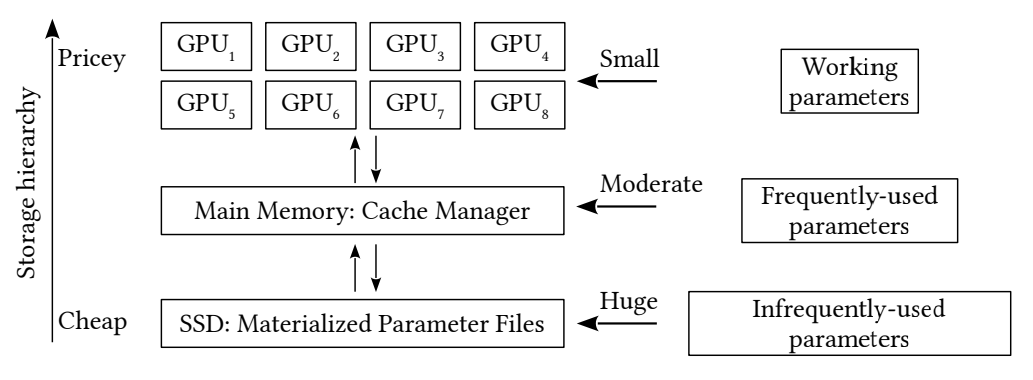

![Distributed Hierarchical GPU Parameter Server for Massive Scale Deep Learning Ads Systems | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/15b6fba2bfe6e9cb443d0b6177d6ec5501cff579/14-Figure7-1.png)

[PDF] Distributed Hierarchical GPU Parameter Server for Massive Scale Deep Learning Ads Systems | Semantic Scholar

Using DeepSpeed and Megatron to Train Megatron-Turing NLG 530B, the World's Largest and Most Powerful Generative Language Model | NVIDIA Technical Blog